Large Language Models

- Artificial Analysis has a great breakdown of LLM quality vs cost vs latency vs speed

- [mini-omni2](https://github.com/gpt-omni/mini-omni2) is a project to build something like GPT-4o, with multimodal and duplex speech capability

Subtopics

Projects

Notes

Tools

- H2OGPT - Local web-based interface for chatting with document DB indexes, etc.

- Lit-GPT - Pretrain, finetune, deploy 20+ LLMs on your own data. Uses state-of-the-art techniques: flash attention, FSDP, 4-bit, LoRA, and more.

- Instructor Embedding - Embedding specifically adapted to instructions

Finetuning

Finetuning can deliver better results than RAG, but it requires a training step and training data prepared in the format the training tool expects.
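As a rough illustration of what "data prepared in the right format" can mean, here is a minimal sketch that converts raw question/answer pairs into instruction-style JSONL records. The `instruction` / `input` / `output` field names follow the commonly used Alpaca-style convention and are an assumption here; the schema a given finetuning tool expects may differ, so check its documentation. `RAW_EXAMPLES`, `to_record`, and `to_jsonl` are hypothetical names made up for this example.

```python
import json

# Hypothetical raw examples; in practice these come from your own data.
RAW_EXAMPLES = [
    {
        "question": " What is LoRA? ",
        "answer": "A parameter-efficient finetuning method.",
    },
    {
        "question": "Summarize the text.",
        "context": "LLMs are large neural networks.",
        "answer": "LLMs are big neural nets.",
    },
]


def to_record(example: dict) -> dict:
    """Map one raw example to an Alpaca-style record (assumed schema)."""
    return {
        "instruction": example["question"].strip(),
        "input": example.get("context", "").strip(),  # empty when no context
        "output": example["answer"].strip(),
    }


def to_jsonl(examples: list[dict]) -> str:
    """Serialize examples as JSONL: one JSON object per line."""
    return "\n".join(
        json.dumps(to_record(e), ensure_ascii=False) for e in examples
    )


if __name__ == "__main__":
    print(to_jsonl(RAW_EXAMPLES))
```

Writing the returned string to a `.jsonl` file gives a dataset with one training example per line, which is a layout many finetuning tools can read once the field names match what they expect.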

Tools

- Axolotl - A tool that streamlines fine-tuning of various AI models, with support for multiple configurations and architectures.

Scaling Laws

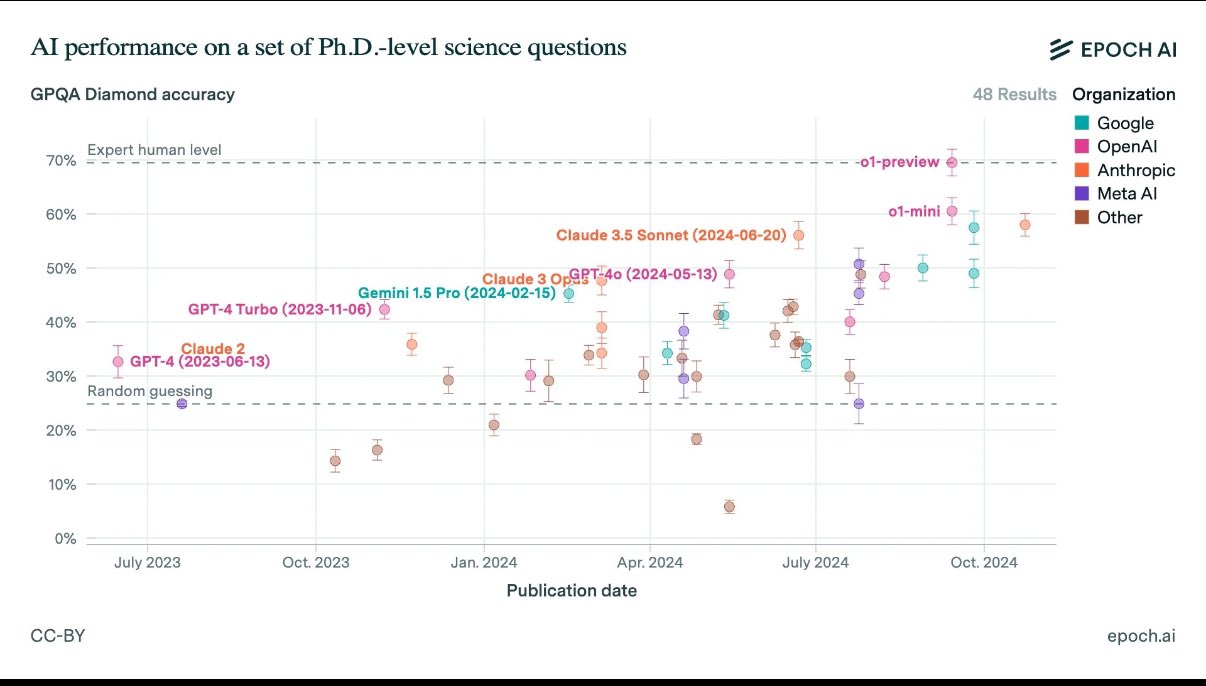

Performance improvements 2023-2024:

gwern on the point of o1 from the perspective of the scaling laws